When I first started working with cloud infrastructure, I believed that “real engineering” meant provisioning servers, tuning Linux kernels, configuring load balancers, and watching CPU graphs like a hawk. If a service wasn’t running on a carefully configured EC2 instance, it somehow didn’t feel serious.

Then one small feature request changed my perspective.

A product manager asked me to build a simple image-processing pipeline: whenever a user uploads a profile picture, we need to resize it into three versions. That’s it. No complex workflows. No heavy analytics. Just resize and store.

My first instinct? Spin up an EC2 instance, configure Nginx, write a background worker, add a queue, secure it, monitor it, patch it. But then I paused and asked myself a question I wish I had asked earlier in my career:

“Why am I building an entire power plant to turn on a single light bulb?”

That was the moment I truly understood the power of AWS Lambda.

The Real Problem with Traditional Servers

Before diving deep into Lambda, let’s be honest about traditional infrastructure.

When you run workloads on virtual machines like those in Amazon EC2, you are responsible for:

- Provisioning instances

- Configuring operating systems

- Applying security patches

- Monitoring CPU, memory, and disk

- Scaling up and down

- Managing high availability

Even if your application only runs once per hour.

Imagine renting an entire restaurant kitchen 24/7 just to make one cup of tea every evening. That’s how inefficient it can feel to run light, event-based workloads on always-on servers.

This inefficiency is exactly what Lambda was designed to eliminate.

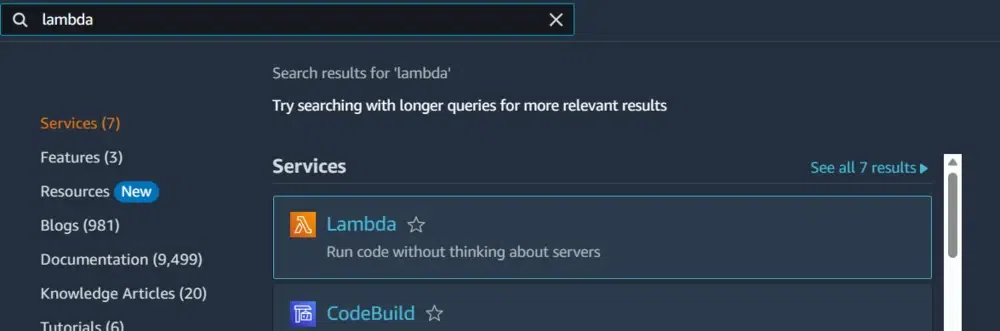

What AWS Lambda Actually Is

AWS Lambda is a compute service that runs your code in response to events and charges you only for the time your code actually executes.

- Not for idle time.

- Not for server uptime.

- Not for provisioning capacity “just in case.”

Only for execution time.

But here’s the key insight that changed how I think about backend systems:

Lambda is not about removing servers. It’s about removing server management.

There are still servers. They just aren’t your problem anymore.

Serverless: The Most Misunderstood Word in Cloud

The term “serverless” confuses many engineers.

It doesn’t mean there are no servers. It means:

- You don’t provision them

- You don’t patch them

- You don’t scale them

- You don’t monitor the OS layer

AWS does all of that for you.

This is similar to electricity in your home. You don’t manage the power plant. You just flip the switch and pay for what you consume.

That’s the mental model.

The Event-Driven Model: The Heart of Lambda

Lambda shines when you understand one thing: it is event-driven.

Nothing runs until something happens.

The flow is simple but powerful:

- An event occurs, for example:

  - A file is uploaded to Amazon S3

  - An HTTP request hits Amazon API Gateway

  - A message arrives in Amazon SQS

- Lambda is triggered

- Your code runs

- The result is stored or returned

That’s it.

- No always-on worker.

- No cron daemon.

- No background service consuming memory for hours.

This model completely changes how you design systems.
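To make the trigger step concrete, here is a minimal sketch of how a single handler might tell those three event sources apart. The field names (`eventSource`, `httpMethod`, `requestContext`) follow the payload shapes AWS documents for S3, SQS, and API Gateway. The `detect_source` helper and its labels are hypothetical, and in practice you would usually wire one function per trigger rather than dispatch like this.

```python
def detect_source(event):
    """Return a short label for the service that produced this event."""
    records = event.get("Records") or []
    if records:
        # S3 and SQS both deliver a batch under "Records"; each record
        # names its origin in "eventSource".
        source = records[0].get("eventSource", "")
        if source == "aws:s3":
            return "s3"
        if source == "aws:sqs":
            return "sqs"
    # REST API proxy events carry "httpMethod"; HTTP API (v2) events
    # carry the method under "requestContext" -> "http".
    request_context = event.get("requestContext") or {}
    if "httpMethod" in event or "http" in request_context:
        return "api-gateway"
    return "unknown"


def lambda_handler(event, context):
    source = detect_source(event)
    print(f"Triggered by: {source}")
    return {"source": source}
```

Calling `lambda_handler({"httpMethod": "GET"}, None)` locally is enough to see the dispatch work without deploying anything.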

A Real Example: Image Resizing at Scale

Let’s revisit the image upload problem.

Traditional Approach:

- EC2 instance running a worker

- S3 bucket for storage

- Message queue

- Monitoring service

- Auto-scaling group

Even if only 10 images are uploaded per day, the infrastructure runs 24/7.

Lambda Approach:

- User uploads image to S3

- S3 triggers Lambda

- Lambda resizes image

- Stores thumbnail back to S3

No idle cost.

Automatic scaling.

Zero server management.
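Here is roughly what that function could look like. Treat it as a sketch under assumptions, not the code I shipped: it assumes Pillow is packaged with the function, and the three sizes, the `thumbnails/` prefix, and the helper names are all hypothetical. The `boto3` and Pillow calls (`get_object`, `put_object`, `Image.thumbnail`) are real.

```python
import io
from urllib.parse import unquote_plus

# Hypothetical size labels and maximum edge lengths in pixels.
SIZES = {"small": 64, "medium": 256, "large": 1024}
OUTPUT_PREFIX = "thumbnails/"  # hypothetical key prefix for resized copies


def uploaded_objects(event):
    """Yield (bucket, key) for every object in an S3 notification event."""
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded (a space becomes "+").
        key = unquote_plus(record["s3"]["object"]["key"])
        yield bucket, key


def thumbnail_key(key, label):
    """Build the destination key for one resized version."""
    filename = key.rsplit("/", 1)[-1]
    return f"{OUTPUT_PREFIX}{label}/{filename}"


def lambda_handler(event, context):
    # Imported here so the helpers above can be tested without the SDK installed.
    import boto3
    from PIL import Image

    s3 = boto3.client("s3")
    written = []
    for bucket, key in uploaded_objects(event):
        if key.startswith(OUTPUT_PREFIX):
            continue  # skip our own output so the function never re-triggers itself
        original = s3.get_object(Bucket=bucket, Key=key)["Body"].read()
        for label, edge in SIZES.items():
            image = Image.open(io.BytesIO(original))
            image_format = image.format or "PNG"
            image.thumbnail((edge, edge))  # shrinks in place, keeps aspect ratio
            buffer = io.BytesIO()
            image.save(buffer, format=image_format)
            target = thumbnail_key(key, label)
            s3.put_object(Bucket=bucket, Key=target, Body=buffer.getvalue())
            written.append(target)
    return {"written": written}
```

The prefix check matters: if thumbnails land in the same bucket that triggers the function, writing them without a guard (or a prefix filter on the trigger itself) creates an invocation loop.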

Now imagine Black Friday traffic where 10 images per day suddenly become 10,000 per minute.

With EC2, you panic and scale manually or rely on preconfigured scaling rules.

With Lambda? It scales automatically.

That’s not magic. It’s architecture.

Continuous, Automatic Scaling

One of the most impressive aspects of Lambda is its scaling model.

If one event comes in, it runs one execution.

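If 10,000 events come in simultaneously, AWS can spin up 10,000 concurrent executions, provided your account’s concurrency quota allows it (the default is 1,000 per Region and can be raised on request).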

- No load balancers.

- No manual scaling policies.

- No capacity planning spreadsheets.

This elasticity is similar to what I covered in my deep dive on scalability principles, the article on Google L7 system design interview lessons.

In high-level system design interviews, interviewers care deeply about scalability models. Lambda gives you horizontal scaling by default.

That’s powerful.
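It helps to put a number on “concurrent executions.” A rough estimate is requests per second multiplied by average duration in seconds. The sketch below applies that to the Black Friday figure from earlier; the 400 ms duration is an assumption for illustration, and the function name is mine.

```python
DEFAULT_REGIONAL_QUOTA = 1_000  # default concurrent executions per Region


def required_concurrency(requests_per_second, avg_duration_seconds):
    """Average number of executions in flight at the same time."""
    return requests_per_second * avg_duration_seconds


# 10,000 uploads per minute, each assumed to take 400 ms to resize.
peak = required_concurrency(10_000 / 60, 0.4)
print(f"about {peak:.0f} concurrent executions")
print("fits default quota:", peak <= DEFAULT_REGIONAL_QUOTA)
```

Under those assumptions the spike needs only a few dozen executions at once, which is why shaving duration helps scaling headroom as well as cost.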

The Pricing Model: Why Optimization Matters

Lambda pricing is beautifully simple:

You pay for:

- Number of requests

- Execution duration (in milliseconds)

- Allocated memory

There’s a generous free tier:

- 1 million requests per month

- 400,000 GB-seconds compute time

But here’s something many beginners miss:

If your function runs for 10 seconds, you pay for 10 seconds.

If you optimize it to run in 200 milliseconds, your bill drops dramatically.

I once refactored a data transformation function that was running in 3.5 seconds. After optimizing memory allocation and removing blocking I/O, it dropped to 400 ms.

The cost difference at scale was massive.

Serverless doesn’t remove the need for optimization.

It makes optimization directly visible in your bill.
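To see how directly duration shows up in the bill, here is a back-of-the-envelope sketch of the 3.5 s to 400 ms refactor. The prices are assumed on-demand x86 rates for us-east-1 at the time of writing, the 10 million invocations and 512 MB memory setting are hypothetical, and the free tier is ignored. Check the current AWS pricing page before relying on any of it.

```python
# Assumed prices; verify against the current AWS Lambda pricing page.
PRICE_PER_REQUEST = 0.20 / 1_000_000
PRICE_PER_GB_SECOND = 0.0000166667


def monthly_cost(invocations, duration_seconds, memory_mb):
    """Request charge plus duration charge, ignoring the free tier."""
    gb_seconds = invocations * duration_seconds * (memory_mb / 1024)
    return invocations * PRICE_PER_REQUEST + gb_seconds * PRICE_PER_GB_SECOND


# Hypothetical workload: 10 million invocations a month at 512 MB.
before = monthly_cost(10_000_000, 3.5, 512)
after = monthly_cost(10_000_000, 0.4, 512)
print(f"before: ${before:.2f}, after: ${after:.2f}")
```

With these inputs the monthly figure drops from roughly $294 to roughly $35. The request charge stays the same; the entire saving comes from billed duration.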

Anatomy of a Lambda Function

Here’s a minimal Python example:

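    def lambda_handler(event, context):
        # Log the incoming event; print output ends up in CloudWatch Logs.
        print("Received event:", event)

        # Fall back to "World" when the event has no "key1" field.
        message = "Hello, " + event.get("key1", "World")

        return {
            "statusCode": 200,
            "body": message,
        }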

There are three key components:

1. The Handler

This is the entry point. AWS calls this function.

2. The Event Object

Contains trigger data:

- HTTP body (API Gateway)

- File details (S3)

- Stream records (Kinesis)

3. The Context Object

Contains runtime info:

- Function name

- Memory allocation

- Remaining execution time

Understanding these three is enough to start building production-ready functions.
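The context object is the one people tend to skip, so here is a small sketch that reads it. The attributes and the `get_remaining_time_in_millis()` method are what the Python runtime exposes. The one-second safety margin and the `FakeContext` class are hypothetical; the fake exists only so the handler can be exercised locally.

```python
def lambda_handler(event, context):
    # Attributes and method exposed by the Python runtime's context object.
    remaining_ms = context.get_remaining_time_in_millis()
    print(
        f"{context.function_name} ({context.memory_limit_in_mb} MB), "
        f"request {context.aws_request_id}, {remaining_ms} ms left"
    )
    # Hypothetical safety margin: stop early rather than be cut off mid-write.
    if remaining_ms < 1_000:
        return {"statusCode": 503, "body": "Not enough time left to run safely"}
    return {"statusCode": 200, "body": "Hello, " + event.get("key1", "World")}


class FakeContext:
    """Stand-in for local testing; Lambda passes the real object at runtime."""

    function_name = "demo-function"
    memory_limit_in_mb = 128
    aws_request_id = "local-test"

    def __init__(self, remaining_ms):
        self._remaining_ms = remaining_ms

    def get_remaining_time_in_millis(self):
        return self._remaining_ms
```

Locally, `lambda_handler({"key1": "Ada"}, FakeContext(5000))` runs the happy path, and a small remaining time exercises the early exit.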

Real-World Use Cases (Beyond Hello World)

1. Serverless APIs

Using API Gateway + Lambda, you can build REST APIs without managing servers.

For startups, this is revolutionary. No DevOps overhead. Just deploy and run.
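As a sketch of what sits behind such an endpoint, here is a handler for API Gateway’s Lambda proxy integration. The event fields (`body`, `isBase64Encoded`) and the response shape (`statusCode`, `headers`, `body` as a string) follow that integration’s format. The greeting logic and the `_response` helper are hypothetical.

```python
import base64
import json


def _response(status_code, body):
    """Build a proxy-integration response; the body must be a string."""
    return {
        "statusCode": status_code,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(body),
    }


def lambda_handler(event, context):
    raw_body = event.get("body") or "{}"
    if event.get("isBase64Encoded"):
        raw_body = base64.b64decode(raw_body).decode("utf-8")
    try:
        payload = json.loads(raw_body)
    except json.JSONDecodeError:
        return _response(400, {"error": "Request body must be valid JSON"})
    if not isinstance(payload, dict):
        return _response(400, {"error": "Request body must be a JSON object"})
    return _response(200, {"message": "Hello, " + str(payload.get("name", "World"))})
```

Returning a 400 for bad input, instead of letting the exception escape, keeps client mistakes out of the function’s error metrics.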

2. Real-Time Stream Processing

With streaming systems, like the one I discussed in my analysis of LinkedIn’s Kafka replacement system, event-driven compute becomes especially relevant.

Lambda can consume data from streams and process:

- IoT telemetry

- Financial transactions

- Log pipelines
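For a feel of what stream consumption looks like, here is a minimal sketch for a Kinesis-triggered function. Kinesis delivers each record’s payload base64-encoded under `record["kinesis"]["data"]`. The telemetry shape (a JSON object with a `temperature` field) and the averaging logic are assumptions for illustration.

```python
import base64
import json


def decode_records(event):
    """Yield the JSON payload of each Kinesis record in the batch."""
    for record in event.get("Records", []):
        # Kinesis delivers each record's data base64-encoded.
        raw = base64.b64decode(record["kinesis"]["data"])
        yield json.loads(raw)


def lambda_handler(event, context):
    total = 0.0
    count = 0
    for reading in decode_records(event):
        total += reading["temperature"]  # hypothetical telemetry field
        count += 1
    average = total / count if count else None
    return {"records": count, "average_temperature": average}
```

Lambda hands the function a batch of records per invocation, so per-batch aggregation like this is a natural fit.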

3. ETL Pipelines

Instead of provisioning Spark clusters for small jobs, lightweight transformations can run in Lambda.

4. Scheduled Jobs

Using Amazon EventBridge, you can run cron-like jobs in the cloud.

Daily report at midnight?

Resource cleanup every hour?

Automated backups?

No server required.
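A scheduled function is just a handler that receives a small event with a timestamp. The sketch below shows the midnight-report case: the schedule expressions in the comment use EventBridge’s real syntax, and the `time` field is part of the scheduled event payload. The report logic and the `report_date` helper are hypothetical.

```python
from datetime import datetime, timedelta

# Schedule expressions an EventBridge rule could use (evaluated in UTC):
#   cron(0 0 * * ? *)  -> every day at midnight
#   rate(1 hour)       -> once an hour


def report_date(event):
    """Return the calendar day a midnight run should report on (the day before)."""
    fired_at = datetime.strptime(event["time"], "%Y-%m-%dT%H:%M:%SZ")
    return (fired_at - timedelta(days=1)).date().isoformat()


def lambda_handler(event, context):
    day = report_date(event)
    print(f"Building daily report for {day}")  # hypothetical job body
    return {"report_date": day}
```

Deriving the date from the event instead of the wall clock means a retried or delayed run still reports on the intended day.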

Monitoring and Observability

Many people assume serverless means less monitoring.

Wrong.

You still need visibility.

With Amazon CloudWatch you can track:

- Invocation count

- Execution duration

- Error rate

- Throttling

- Memory usage (reported in each invocation’s REPORT log line and in Lambda Insights, not as a standard metric)

You can also set alarms for thresholds.

For deeper observability, teams integrate:

- Datadog

- New Relic

Serverless reduces infrastructure complexity, not engineering responsibility.
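One low-effort way to get that visibility is structured logging: anything a function prints goes to CloudWatch Logs, and JSON lines are easy to query or forward to other tools. The decorator below is a sketch of the idea. The `logged` name and the log fields are my own choices, not an AWS convention.

```python
import functools
import json
import time


def logged(handler):
    """Wrap a handler so each invocation prints one JSON log line."""

    @functools.wraps(handler)
    def wrapper(event, context):
        started = time.perf_counter()
        outcome = "success"
        try:
            return handler(event, context)
        except Exception as exc:
            outcome = f"error:{type(exc).__name__}"
            raise  # re-raise so Lambda still records the failure
        finally:
            print(json.dumps({
                "function": getattr(context, "function_name", "unknown"),
                "outcome": outcome,
                "duration_ms": round((time.perf_counter() - started) * 1000, 2),
            }))

    return wrapper


@logged
def lambda_handler(event, context):
    return {"statusCode": 200, "body": "ok"}
```

Because the exception is re-raised, the built-in error metric and any alarms on it keep working; the log line only adds detail.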

When NOT to Use AWS Lambda

Lambda is powerful, but it’s not a silver bullet.

Avoid it when:

- You need long-running processes (over 15 minutes)

- You require full OS-level control

- You have extremely latency-sensitive workloads

- You need predictable, sustained high compute usage

For steady 24/7 heavy workloads, EC2 or container services may be more cost-effective.

This reminds me of the infrastructure trade-offs I explored in my article about moving away from Terraform. Every architectural decision is about trade-offs.

Lambda is amazing — in the right context.

Lambda vs Microservices Thinking

In my Netflix scalability case study on monoliths vs. microservices, I explored how breaking systems into small services enables independent scaling.

Lambda takes that idea further.

Each function becomes:

- Small

- Single-purpose

- Independently scalable

It’s microservices without managing containers.

But design discipline still matters:

- Keep functions small

- Avoid heavy cold-start dependencies

- Design idempotent handlers

- Handle retries properly
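Idempotency and retries go together: many event sources can deliver the same event more than once, so a handler should produce the same outcome on a repeat. Here is a minimal sketch of the pattern for an SQS-triggered function, keyed on each message’s `messageId`. The in-memory dictionary is only a stand-in so the example runs; it does not survive across execution environments, so a real function would need a durable store. `handle_once` is a hypothetical helper.

```python
processed = {}  # in-memory stand-in; a real function needs a durable store


def handle_once(message_id, payload, work):
    """Run `work` at most once per message_id; replay the stored result on retries."""
    if message_id in processed:
        return processed[message_id]
    result = work(payload)
    processed[message_id] = result
    return result


def lambda_handler(event, context):
    results = []
    for record in event.get("Records", []):
        # An SQS message keeps the same "messageId" when it is redelivered.
        results.append(handle_once(record["messageId"], record["body"], str.upper))
    return results
```

The `str.upper` call is a placeholder for real work with side effects, such as charging a card or sending an email, where running twice would actually hurt.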

Cold Starts: The Real Trade-Off

Let’s talk honestly.

Lambda sometimes introduces cold starts.

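When a function hasn’t run recently, or when traffic outgrows the environments that are already warm, AWS must initialize a new execution environment and load your code. That adds latency.

For most applications, this delay (often well under a second, though heavy runtimes and large packages can push it higher) is acceptable.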

For ultra-low latency financial trading systems?

Maybe not.

Architecture is always about understanding constraints.
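One constraint you can work with: code at module level runs once per execution environment, while the handler runs on every invocation. Putting expensive setup outside the handler means only the cold start pays for it. The sketch below illustrates that split; the `CONFIG` dictionary stands in for real setup work, and the counter exists only to make the behavior visible.

```python
import time

_started = time.perf_counter()
# Stands in for costly setup such as SDK clients, config, or model files.
CONFIG = {"loaded_at": time.time()}
INIT_MS = (time.perf_counter() - _started) * 1000

_invocations = 0


def lambda_handler(event, context):
    global _invocations
    _invocations += 1
    return {
        # True only for the first call in this execution environment.
        "cold_start": _invocations == 1,
        "init_ms": round(INIT_MS, 3),
    }
```

Calling the handler twice in one process returns `cold_start` as true and then false, which mirrors what happens when Lambda reuses a warm environment.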

Case Study: Startup MVP vs Enterprise System

Startup Scenario

A 3-person startup builds:

- API Gateway

- Lambda backend

- DynamoDB storage

- S3 for assets

They launch globally with minimal operational overhead.

- No DevOps hire needed initially.

- No patching.

- No capacity planning.

Enterprise Scenario

A fintech company processes millions of transactions per minute.

They may use:

- Lambda for event ingestion

- Containers for heavy computation

- EC2 for specialized workloads

Hybrid architecture.

Lambda becomes one tool in a broader toolbox.

The Psychological Shift

The biggest impact of Lambda isn’t technical.

It’s mental.

You stop thinking in terms of servers.

You start thinking in terms of events.

Instead of:

“Where should I host this service?”

You ask:

“What event should trigger this logic?”

That shift leads to cleaner architectures.

Final Thoughts

AWS Lambda isn’t just a cloud feature.

It represents a philosophical change in backend engineering:

- Infrastructure becomes invisible

- Billing aligns with execution

- Scaling becomes automatic

- Architecture becomes event-driven

When used correctly, it allows you to focus purely on business logic.

When misused, it can introduce complexity and unexpected costs.

The key is understanding trade-offs — something every serious engineer must master.

If you’re preparing for advanced system design interviews or architecting scalable systems, serverless is no longer optional knowledge. It’s foundational.

And if there’s one lesson I learned from my journey:

Don’t build power plants when you only need a light bulb.

Design for the event.

Pay for execution.

Scale by default.
